What is Email A/B Testing?

A/B testing allows you to turn your marketing ideas into actionable insights to share with your team.

What is A/B Testing and Why Should Marketers Care?

Want a better understanding of what your audience engages with? What attracts their attention and gets them to interact with your latest email? If you're tired of making email marketing decisions based on hunches, A/B testing might be exactly what you need.

In an A/B test, you send two versions of an email to separate groups of subscribers and compare how each performs, turning gut feelings into evidence. Tests vary in complexity: a simple test might compare two subject lines, while a more advanced one might compare two completely different email templates. Either way, the goal is to see which version generates more opens and click-throughs.

Once the emails have been sent, the data has come in and a consistent winner has emerged, you can adapt your email marketing accordingly and start shaping future campaigns around what your audience responds to.


How to Set Up an Email A/B Test

The best practice for running A/B tests is to change only one thing at a time. If you want accurate insights to share with the rest of your marketing team, invest time in planning and analysis. Here are some tips for running a successful A/B test in your future campaigns.

Pick the Variable

When picking the variable for your A/B test, make sure only one variable changes each time. If there is more than one difference between your control and variant emails, you won't know which change affected the results. Testing one variable at a time may feel slower, but it lets you make informed decisions.
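To make the "one variable" rule concrete, here is a minimal sketch that describes each version of an email as a set of settings, refuses to proceed if more than one setting differs, and then randomly splits a subscriber list into two equal groups. The field names, the "Acme" sender and the example addresses are hypothetical, and many email tools handle the split for you; this only illustrates the idea.

```python
import random


def changed_fields(control: dict, variant: dict) -> list[str]:
    """List the email settings that differ between control and variant."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def split_audience(subscribers: list[str], seed: int = 42) -> tuple[list[str], list[str]]:
    """Randomly split subscribers into two groups (A and B) of near-equal size."""
    shuffled = subscribers[:]              # copy so the original list is untouched
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


# Hypothetical email settings: the variant changes only the subject line
control = {"from_name": "Acme", "subject": "Your May update", "send_time": "09:00"}
variant = {**control, "subject": "Sam, your May update"}

diff = changed_fields(control, variant)
if len(diff) != 1:
    raise ValueError(f"Test one variable at a time; these differ: {diff}")

group_a, group_b = split_audience([f"user{i}@example.com" for i in range(10)])
print(diff, len(group_a), len(group_b))
```

Randomising the split matters because it keeps the two groups comparable, so any difference in results can be put down to the one variable you changed.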

9 Email Components You Can Test

When you first start thinking about which variable to change, from design to timing and more, your mind may go blank. Here is a quick list of nine common email components to A/B test.

1. From Name

When you see an email notification, one of the first things you notice is who sent it. You can experiment with the from name, but make sure it's always clear that the email comes from your company. Mailchimp, for example, uses "Mailchimp", "Jenn at Mailchimp" and "Mailchimp Research".

2. Subject Line

The subject line is the most common place to start with email A/B tests, as it is closely linked to open rates. You can experiment with different styles, lengths, tones, emojis and positioning.

3. Preview Text

The subject line may take the lead in enticing subscribers to open an email, but it's not your only option. The three main levers for getting someone to open your email are the from name, the subject line and the preview text.

4. HTML vs. Plain Text

Time to see if the grass really is greener on the other side. If you mostly send HTML emails, try a plain-text version, and if you mostly send plain text, try HTML.

5. Length

In addition to the basic design of an email, you can also vary the length of the email.

Ask yourself:

Do subscribers want more content and context in the message, or just enough to gain some interest?

What lengths are ideal for different types of emails and/or devices?

Do all database segments prefer the same length of email?

6. Personalisation

According to the 2020 State of an Email report, the most popular way to personalise emails is by including the recipient's name. While this method can increase clicks, you can take personalisation much further.

Other factors you can use for A/B testing include the subscriber's customer status, past interactions with your site, purchase history and emails they’ve interacted with.

7. Timing

Most email A/B tests focus on the contents of an email, but you can also test when to send it. You could vary the send time to see whether morning, midday or afternoon gets the most interactions.

8. Copy

The voice and positioning of your copy shape the message and decide whether it catches a reader's interest. A/B testing in the "copy" category covers the wording throughout the email.

9. Imagery

Like using pretty pictures? If you style your emails with imagery, try A/B testing different hero images, using images to break up content, or trying different colour schemes or GIFs. Does including infographics in an email make people more likely to interact with it?

3 Tips for Running More Effective A/B Tests

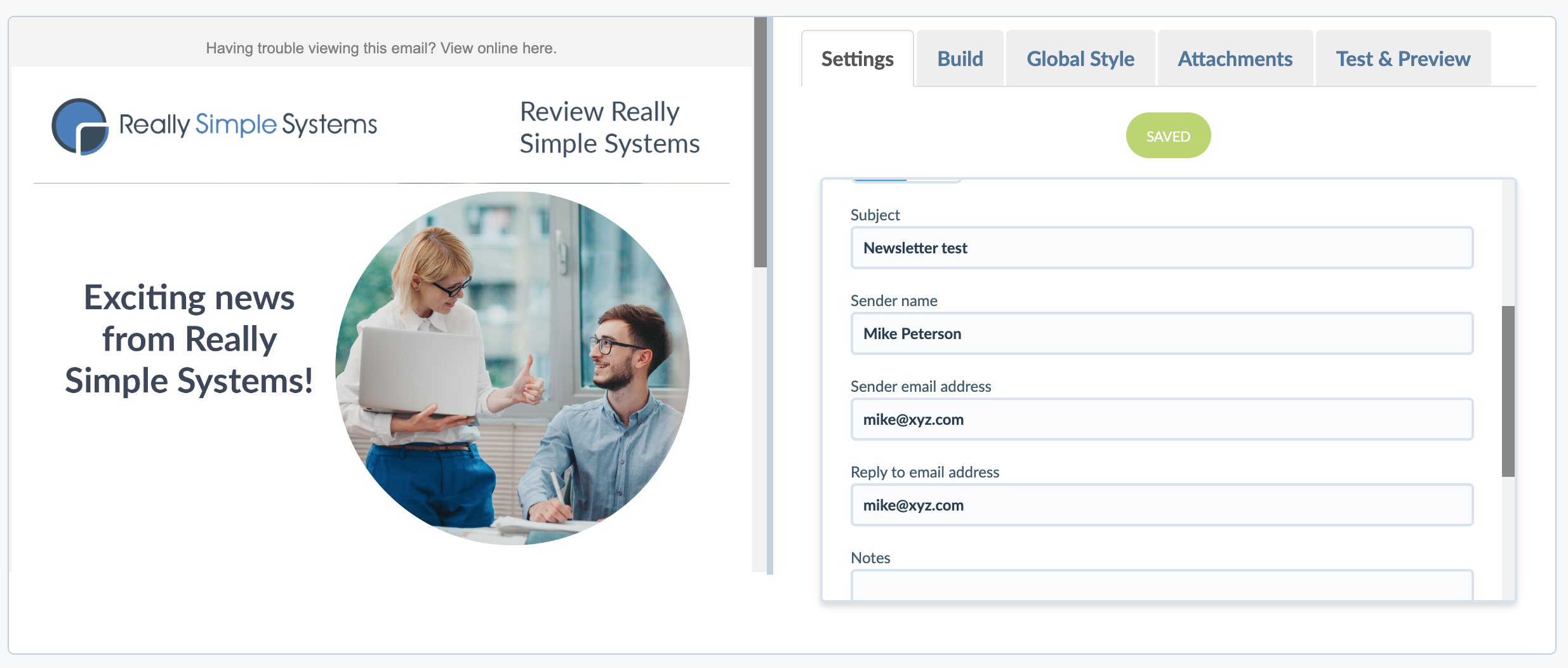

The SpotlerCRM email builder, with its drag-and-drop blocks, makes it quick and easy to build and run A/B email campaigns. Instead of coding multiple versions of the email and testing them across different devices and email clients, you simply make the changes you want.

Before you dive in and start setting up A/B tests and campaigns, there are a few strategic tips that can increase your chances of success.

1. Have a Hypothesis

Before you run a test, you need a strategic hypothesis about why a particular variation might perform better. A basic hypothesis can follow this pattern:

We believe personalising the subject line with the subscriber’s first name will help make our campaign stand out in the inbox and increase the chance it will get opened.

We believe using a button instead of just a text link will make the call to action stand out in the email, drawing the reader’s attention and getting more people to click through.

Hypotheses like these are useful when you need to pitch an A/B test or present its results. As long as you have clear reasoning and a plan for how to focus and monitor the test, the results will speak for themselves.

2. Prioritise Your A/B Tests

Not all A/B tests are equal. You might want to prioritise some over others, such as subject lines over button colours.

For each A/B test idea you have, quickly (even just in your head) score it against the three elements of the ICE score: Impact, Confidence and Ease. This helps you grade each idea and decide which ones to run first.
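If you'd rather write the scores down than keep them in your head, a short script can rank your ideas. This is a minimal sketch, assuming each element is scored from 1 to 10 and the ICE score is the average of the three; some teams multiply the scores instead. The ideas and scores below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    """A hypothetical A/B test idea scored 1-10 on each ICE element."""
    name: str
    impact: int      # how much could this move opens or clicks?
    confidence: int  # how sure are we that it will work?
    ease: int        # how easy is it to set up?

    def ice_score(self) -> float:
        # Assumed convention: average the three scores (multiplying is another option)
        return (self.impact + self.confidence + self.ease) / 3


def prioritise(ideas: list[TestIdea]) -> list[TestIdea]:
    """Return ideas sorted from highest to lowest ICE score."""
    return sorted(ideas, key=lambda idea: idea.ice_score(), reverse=True)


ideas = [
    TestIdea("Button colour", impact=3, confidence=4, ease=9),
    TestIdea("First name in subject line", impact=8, confidence=7, ease=8),
    TestIdea("New email template", impact=7, confidence=5, ease=3),
]

for idea in prioritise(ideas):
    print(f"{idea.name}: {idea.ice_score():.1f}")
```

With these made-up scores, the subject-line test comes out on top, which matches the advice above to favour subject lines over button colours.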

3. Build on Your Learnings

Not every A/B test will result in positive increases, and some results may even show negative effects. The key is to use that data to create better campaigns.

For example, you might run a series of A/B email campaigns over three months, comparing the results of each campaign individually and then of the series as a whole. You can then apply what that data and feedback tell you to future campaigns.

Analyse the Results

When it's time to analyse the results, all the time you spent planning and building the campaigns pays off. Because you went in with a clear hypothesis, you know what to look for once the campaign is finished. Compare the results and share them with your team.

You don't have to re-test the campaign, but bear in mind that any improvement might have been boosted by the "shiny new" factor: subscribers reacting to novelty rather than to a lasting preference. If you want to confirm the first test's results, you can run a similar test to see if the learnings hold true!
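Comparing results usually starts with each version's open or click rate. As a minimal sketch, assuming you can export how many emails each version sent and how many opens (or clicks) each received, the code below computes both rates and runs a two-proportion z-test, one common way to check whether the gap is larger than chance alone would plausibly produce. The figures are hypothetical.

```python
import math


def rate(events: int, sent: int) -> float:
    """Share of recipients who opened (or clicked)."""
    return events / sent


def two_proportion_z_test(events_a: int, sent_a: int,
                          events_b: int, sent_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a = rate(events_a, sent_a)
    p_b = rate(events_b, sent_b)
    # Pooled rate, assuming both versions actually perform the same
    pooled = (events_a + events_b) / (sent_a + sent_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if se == 0:
        return 0.0, 1.0  # every recipient behaved identically; nothing to compare
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


sent_a, opens_a = 2000, 400  # hypothetical control results
sent_b, opens_b = 2000, 470  # hypothetical variant results
z, p = two_proportion_z_test(opens_a, sent_a, opens_b, sent_b)
print(f"A: {rate(opens_a, sent_a):.1%}  B: {rate(opens_b, sent_b):.1%}")
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value (conventionally below 0.05) suggests the difference is unlikely to be random noise, though it says nothing about why version B did better; that is what your hypothesis is for.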

Wrap Up

Get started on A/B email campaigns. Create a hypothesis for how you could improve your campaigns, set up your test, then send it and monitor what happens. Opens and click-throughs may rise or fall, but either way you'll learn something about your audience that can help you create better campaigns in the future.

When you start your own A/B email campaign, just remember:

Have a hypothesis

Pick the variable

Prioritise A/B tests

Analyse the results

Really Simple Systems is now Spotler CRM

The same great technology, a CRM platform that is focused on the needs of B2B marketers, provided by the same great team, at a great price!